Recently, I was busy with one of the major data recoveries and unfortunately there was no backup. First of all, I asked for the SQL Server log data to recover their deleted data. After a while, I got it but the major problem was that the varbinary data was available in ntext data type column in the dummy table. As you guys know, SQL Server maintains its log data in varbinary format. I tried to convert it into varbinary and received the given below error message. After a while, I solved it. Given below is the solution.

Message Number : 529

Severity : 16

Error Message : Explicit conversion from data type %ls to %ls is not allowed.

Error Generation:

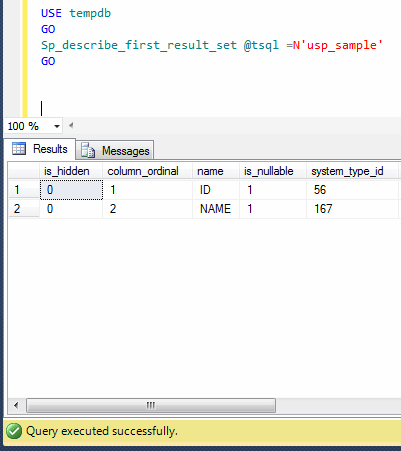

Let me create a sample table to demonstrate this error.

USE tempdb GO --DROP TABLE tbl_sample --GO --Create Table CREATE TABLE tbl_sample ( [Col_ID] INT, [Col_Ntext] NTEXT ) GO --Insert few records INSERT INTO tbl_sample VALUES (1,'0x7261726573716C2E636F6D') INSERT INTO tbl_sample VALUES (2,'0x496D72616E') INSERT INTO tbl_sample VALUES (3,'0x53514C20536572766572') GO --Convert the ntext data type column to varbinary SELECT [Col_ID],CONVERT(VARBINARY(MAX),[Col_Ntext]) AS [Col_Ntext] FROM tbl_sample GO --OUTPUT

Msg 529, Level 16, State 2, Line 1

Explicit conversion from data type ntext to varbinary(max) is not allowed.

Ooopps…… I am unable to convert ntext to varbinary data type. What is the solution ? Let me explain the solution step by step.

Step 1 :

First of all, add one varbinary data type column in the table as shown below.

USE tempdb GO ALTER TABLE tbl_sample ADD [Col_Varbinary] VARBINARY(MAX) GO

Step 2 :

Create an update statement using select statement as shown below.

USE tempdb GO SELECT 'UPDATE tbl_sample SET [Col_Varbinary]=' + CONVERT(VARCHAR(MAX),[Col_Ntext]) +' WHERE [Col_ID] =' + CONVERT(VARCHAR(MAX),[Col_ID]) AS [Query] FROM tbl_sample GO --OUTPUT

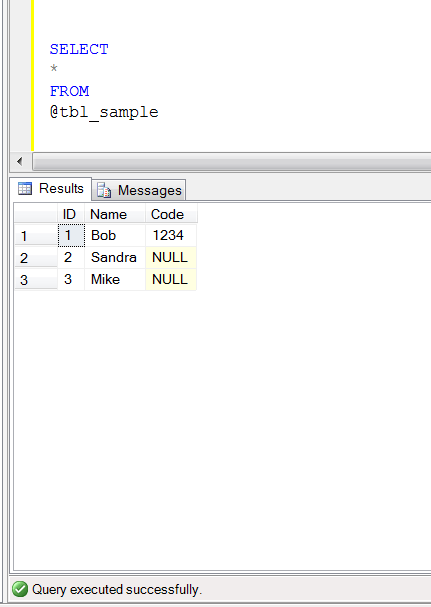

Step 3 :

Once you execute the Step 2 query, you will get the updated statement as a result set as shown above. Just execute that updated statement as shown below.

USE tempdb GO UPDATE tbl_sample SET [Col_Varbinary]=0x7261726573716C2E636F6D WHERE [Col_ID] =1 UPDATE tbl_sample SET [Col_Varbinary]=0x496D72616E WHERE [Col_ID] =2 UPDATE tbl_sample SET [Col_Varbinary]=0x53514C20536572766572 WHERE [Col_ID] =3 GO

Step 4 :

Delete the ntext column of the table (if not required) as shown below. This step is not mandatory.

USE tempdb GO ALTER TABLE tbl_sample DROP COLUMN [Col_Ntext] GO

Step 5 :

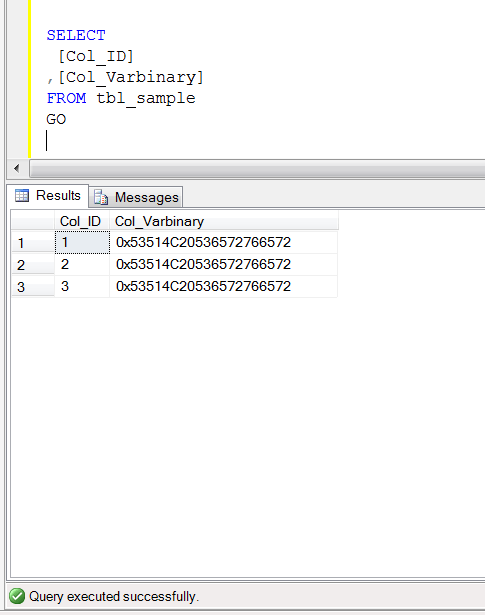

Now, browse the table and you can view that you successfully converted ntext data type to varbinary data type.

USE tempdb GO SELECT [Col_ID] ,[Col_Varbinary] FROM tbl_sample GO